Learning Objectives

In SKYVVA, the bulk-loading option increases the performance of sessions that involve very large volumes of data. During bulk loading, the Integration Service bypasses the database log, which improves performance. Without writing to the database log, however, the target database cannot perform a rollback. When using bulk loading, weigh the need for improved session performance against the ability to recover an incomplete session.

Introduction

The Data Integration Service can use the SKYVVA Bulk API to write data to sObjects. The Bulk API writes large amounts of data to Salesforce with a minimal number of API calls. Users can use it to write data to Salesforce targets with Salesforce API version 31.0.

With a Bulk API write, each batch of data can contain up to 10,000 records or one million characters of data in CSV format. When the Data Integration Service creates a batch, it adds the characters required to format the data, such as quotation marks around text.
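A minimal Python sketch of how records could be packed into CSV batches that respect both limits quoted above. The function names, the greedy packing strategy, and the per-batch header row are assumptions for illustration, not the Data Integration Service's actual code; `csv.QUOTE_NONNUMERIC` stands in for the quoting step described above.

```python
import csv
import io

MAX_RECORDS = 10_000     # per-batch record limit stated in the text
MAX_CHARS = 1_000_000    # per-batch character limit stated in the text


def to_csv_line(row):
    """Format one row as a CSV line; QUOTE_NONNUMERIC wraps text values in quotes."""
    buf = io.StringIO()
    csv.writer(buf, quoting=csv.QUOTE_NONNUMERIC, lineterminator="\n").writerow(row)
    return buf.getvalue()


def build_batches(header, rows, max_records=MAX_RECORDS, max_chars=MAX_CHARS):
    """Greedily pack rows into CSV batches that respect both limits.

    Assumption: every batch starts with its own header line, and the header
    counts toward the character limit.
    """
    header_line = to_csv_line(header)
    batches, current, size = [], [], len(header_line)
    for row in rows:
        line = to_csv_line(row)
        if len(header_line) + len(line) > max_chars:
            raise ValueError("a single row does not fit in one batch")
        # Close the current batch if adding this row would break either limit.
        if current and (len(current) >= max_records or size + len(line) > max_chars):
            batches.append(header_line + "".join(current))
            current, size = [], len(header_line)
        current.append(line)
        size += len(line)
    if current:
        batches.append(header_line + "".join(current))
    return batches


batches = build_batches(("Name", "Count"), [("Acme", 1)] * 25_000)
print(len(batches))  # 3 batches: 10,000 + 10,000 + 5,000 records
```

Whichever limit is reached first closes the batch, so many short records hit the record limit while a few very wide records hit the character limit.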

Users can configure a Bulk API target session to load batches serially or in parallel. By default, data is loaded in parallel mode, but users can override this and load in serial mode. Users can also monitor the progress of batches in the session log.

To configure a session to use the Bulk API for Salesforce targets, select the Use SKYVVA Bulk API session property. When the user selects this property, the Data Integration Service ignores the Max Batch Size session property.

SKYVVA Bulk Interface Processing

SKYVVA Bulk Interface Processing is an interface used for processing bulk attachments. It is needed when the data volume is large (more than 5,000 records). Before configuring Bulk Interface Processing, the user needs to understand the following parameters:

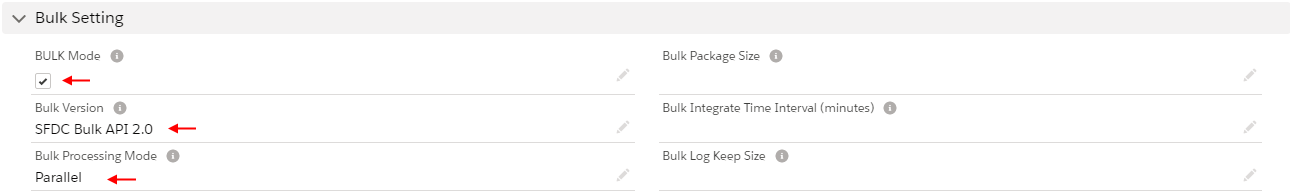

BULK Mode: Select this checkbox to run the interface in bulk mode using the Salesforce Bulk API.

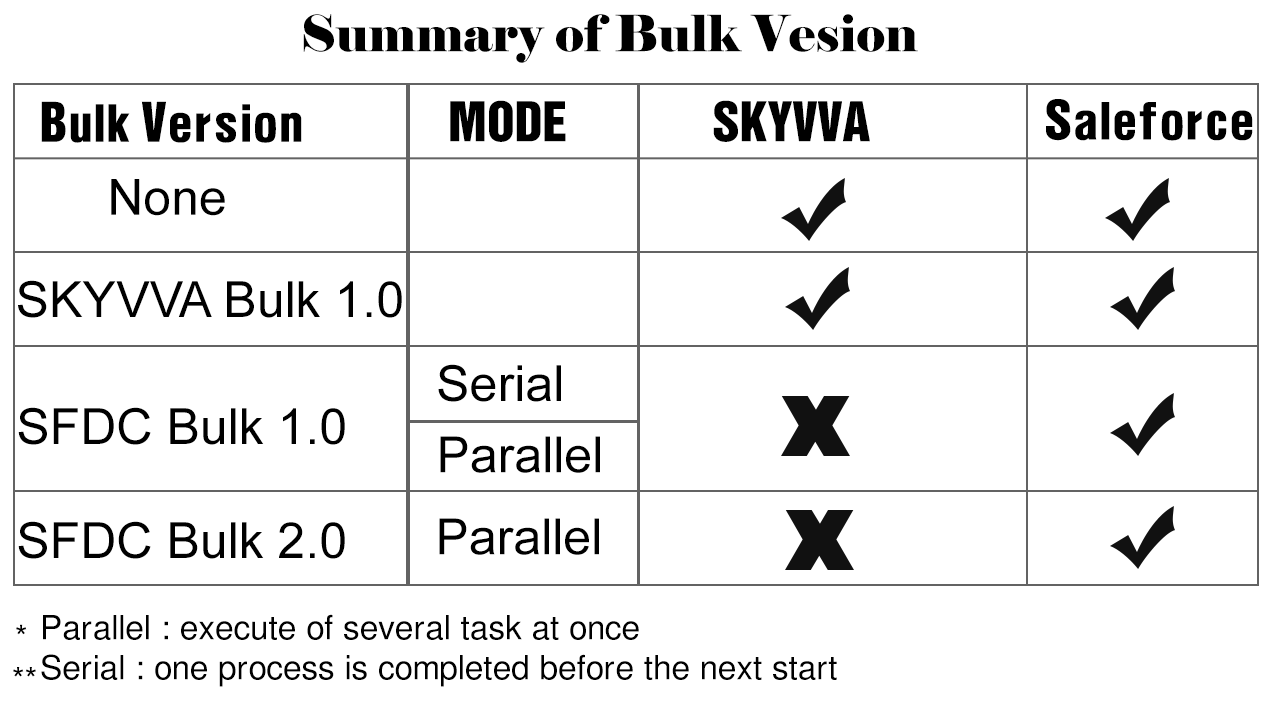

Bulk Version: We support the pure SFDC bulk mode in Bulk API version 1.0 or 2.0. We also provide our own SKYVVA bulk mode, which uses the SKYVVA workflow and mapping and is therefore more powerful but slower than the pure Salesforce Bulk API technique.

Bulk Processing Mode: This field selects one of the two processing modes supported by Bulk API 1.0; Bulk API 2.0 supports only parallel mode. The available value therefore depends on the Bulk Version field: for example, to use Serial mode, select Bulk V 1.0. The two modes are:

The figure shows the SKYVVA Bulk Processing Mode options: Parallel and Serial.


Parallel: Batches are processed concurrently.

Serial: Batches are processed one at a time, never concurrently.
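The difference between the two modes can be sketched in Python. `process_batch` and `run_batches` are hypothetical names; the sketch only models ordering and concurrency, plus the rule above that Serial mode requires Bulk V 1.0. It does not call Salesforce.

```python
from concurrent.futures import ThreadPoolExecutor


def process_batch(batch):
    """Hypothetical stand-in for submitting one batch; here it only counts records."""
    return len(batch)


def run_batches(batches, mode="Parallel", bulk_version="1.0", max_workers=4):
    """Process batches serially or in parallel, mirroring the Bulk Processing Mode field."""
    if mode == "Serial":
        if bulk_version != "1.0":
            raise ValueError("Serial mode requires Bulk API 1.0")
        # One batch at a time, in submission order.
        return [process_batch(b) for b in batches]
    if mode == "Parallel":
        # Several batches may be in flight at once; map() still returns
        # results in the order the batches were given.
        with ThreadPoolExecutor(max_workers=max_workers) as pool:
            return list(pool.map(process_batch, batches))
    raise ValueError(f"unknown processing mode: {mode!r}")


print(run_batches([[1, 2], [3], [4, 5, 6]], mode="Serial"))    # [2, 1, 3]
print(run_batches([[1, 2], [3], [4, 5, 6]], mode="Parallel"))  # [2, 1, 3]
```

Both modes produce the same results; they differ only in whether batches can overlap in time, which matters when batches touch the same records and could contend for locks.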

Bulk Package Size: The number of records in each bulk data set. For example, if a message contains 10,000 records and this parameter is set to 1,000, the user gets 10 bulk data sets in Salesforce.
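The package-size arithmetic from the example above, as a small Python sketch (`split_into_packages` is a hypothetical helper, not a SKYVVA API): the number of bulk data sets is the record count divided by the package size, rounded up.

```python
import math


def split_into_packages(records, package_size):
    """Split one message's records into bulk data sets of at most package_size records."""
    if package_size <= 0:
        raise ValueError("package_size must be a positive integer")
    return [records[i:i + package_size] for i in range(0, len(records), package_size)]


records = list(range(10_000))
packages = split_into_packages(records, 1_000)
print(len(packages))                    # 10
print(math.ceil(len(records) / 1_000))  # 10, the same count from the formula
```

With 10,500 records and the same package size, the result would be 11 data sets, the last one holding 500 records.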

Bulk Monitor Keep Size: The number of bulk execution logs to keep.
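A sketch of the retention behaviour, assuming the keep size caps how many of the most recent logs are retained and the oldest are discarded first. The class name is hypothetical and this is not SKYVVA's implementation.

```python
from collections import deque


class BulkMonitorLog:
    """Retains at most keep_size bulk execution logs.

    Assumption: once the limit is reached, the oldest log is discarded first.
    """

    def __init__(self, keep_size):
        self._logs = deque(maxlen=keep_size)

    def record(self, entry):
        self._logs.append(entry)  # deque drops the oldest entry when full

    def entries(self):
        return list(self._logs)


log = BulkMonitorLog(keep_size=3)
for run in ["run-1", "run-2", "run-3", "run-4", "run-5"]:
    log.record(run)
print(log.entries())  # ['run-3', 'run-4', 'run-5']
```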

Bulk Search Frequency: The scheduling frequency of the bulk scheduler on the interface.

Bulk Integrate Time Interval (minute): The interval, in minutes, at which the integrate bulk job scheduler runs. For example, if the value is 10, the integrate bulk scheduler runs every 10 minutes.
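The interval arithmetic can be sketched as follows; `next_runs` is a hypothetical helper that only computes timestamps and does not schedule anything.

```python
from datetime import datetime, timedelta


def next_runs(start, interval_minutes, count):
    """Return the next `count` run times of a scheduler that fires every interval_minutes."""
    if interval_minutes <= 0:
        raise ValueError("interval_minutes must be positive")
    step = timedelta(minutes=interval_minutes)
    return [start + step * i for i in range(1, count + 1)]


runs = next_runs(datetime(2024, 1, 1, 9, 0), interval_minutes=10, count=3)
print([r.strftime("%H:%M") for r in runs])  # ['09:10', '09:20', '09:30']
```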

Difference Between Normal and Bulk Mode

| Normal Mode | Bulk Mode |
| --- | --- |
| In normal mode, the Integration Service writes to the database logs during the job run. | In bulk mode, the Integration Service bypasses the database logs during the job run to improve performance. |
| Because the database logs are available, the job can be recovered after a failure. | Because the database logs are bypassed, the job cannot be recovered after a failure. |
| Indexes, constraints, and auto-generated features can be available at the table level during the job run. | During a bulk load, indexes, constraints, and auto-generated features should not be configured on the table. |


Difference Between SKYVVA Bulk and SFDC Bulk Mode

| SKYVVA Bulk Mode | SFDC Bulk Mode |
| --- | --- |
| In SKYVVA Bulk Mode, users can upsert, update, insert, query, queryAll, pullQuery, or delete large volumes of records with the Bulk API, which is optimized for processing large data sets. It simplifies loading, updating, or deleting more than 5,000 records. | In SFDC Bulk Mode, users can create, update, or delete large volumes of records with the Bulk API, which is optimized for processing large data sets. It simplifies loading, updating, or deleting anywhere from a few thousand to millions of records. |
| Monitoring in the Bulk Control Board: after integrating data, users can check whether all records were integrated successfully. Bulk processing is monitored in three sections. Bulk Data Inbox: shows data pushed from the client through the Bulk API. Bulk Data Processing: stores data that is being processed. Bulk Monitor: shows the total records of attachments, the total number of batches, and the batches that have been processed. | Monitoring Bulk Data Load Jobs: a set of records is processed by creating a job that contains the data to be processed asynchronously. The job specifies which object is being processed and which type of operation is used. |
| Operations: the processing operation for all batches in the job. Valid values are delete, insert, query, queryAll, upsert, and update. Supported with SFDC Bulk V1.0 and V2.0 for all job types. | Operations: the processing operation for all batches in the job. Valid values are delete, insert, query (Bulk V1 jobs only), queryAll (Bulk V1 jobs only), upsert, update, and hardDelete (Bulk V1 jobs only). Bulk V2 is also supported for some job types. |
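For reference, the SFDC Bulk Mode job flow described in the table maps onto the public Salesforce Bulk API 2.0 REST calls roughly as sketched below. This is an illustration under stated assumptions, not how SKYVVA or the Agent call the API internally: the API version, instance URL, job ID, and field names are placeholders, and no request is actually sent.

```python
import json

API_VERSION = "59.0"  # assumption: replace with an API version your org supports


def bulk_v2_ingest_requests(instance_url, sobject, operation, csv_data,
                            job_id="<jobId>", external_id_field=None):
    """Describe, without sending, the three REST calls of a Bulk API 2.0 ingest job.

    Returns (method, url, content_type, body) tuples. job_id is a placeholder:
    in practice it comes from the response to the first call.
    """
    base = f"{instance_url}/services/data/v{API_VERSION}/jobs/ingest"
    job = {"object": sobject, "operation": operation,
           "contentType": "CSV", "lineEnding": "LF"}
    if operation == "upsert":
        if not external_id_field:
            raise ValueError("upsert jobs need externalIdFieldName")
        job["externalIdFieldName"] = external_id_field
    return [
        # 1. Create the job: it names the object and the operation.
        ("POST", f"{base}/", "application/json", json.dumps(job)),
        # 2. Upload the CSV data for the job.
        ("PUT", f"{base}/{job_id}/batches/", "text/csv", csv_data),
        # 3. Close the upload so Salesforce starts processing asynchronously.
        ("PATCH", f"{base}/{job_id}/", "application/json",
         json.dumps({"state": "UploadComplete"})),
    ]


# Ext_Id__c is a hypothetical external ID field used only for this example.
for method, url, ctype, body in bulk_v2_ingest_requests(
        "https://example.my.salesforce.com", "Account", "upsert",
        "Name,Ext_Id__c\nAcme,A-1\n", external_id_field="Ext_Id__c"):
    print(method, url)
```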

Advantages and Disadvantages

- The main advantage of SKYVVA bulk loading is a significant performance improvement, especially for large-volume tables.

- The main disadvantage is that a failed SKYVVA job cannot be recovered, because the database logs are bypassed. The only option after a failure is to truncate and reprocess.

For more information, see:

https://trailhead.salesforce.com/en/content/learn/modules/api_basics/api_basics_bulk

How to Use SKYVVA Bulk and SFDC Bulk Mode with the Agent

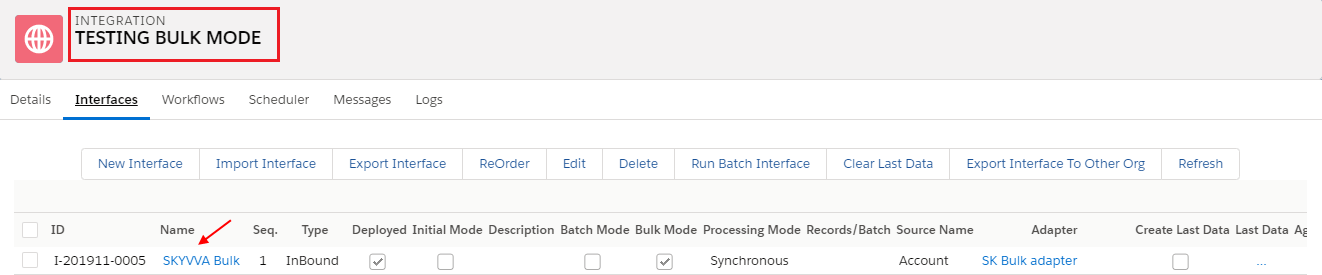

CASE 1: Using SKYVVA Bulk version

- Create an integration

- Create an interface

- Open the interface

- Scroll down to the Bulk Setting section

- Select the BULK Mode checkbox

- Select SKYVVA Bulk1.0 from the picklist

- Save

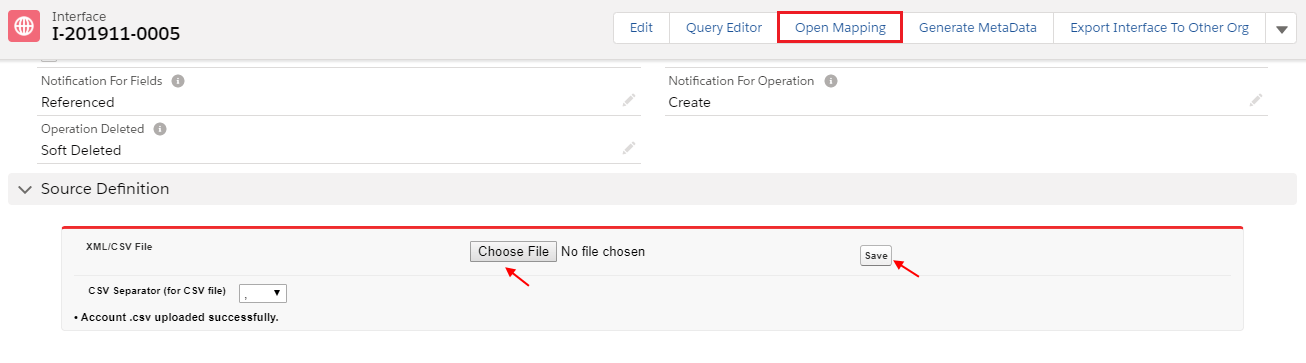

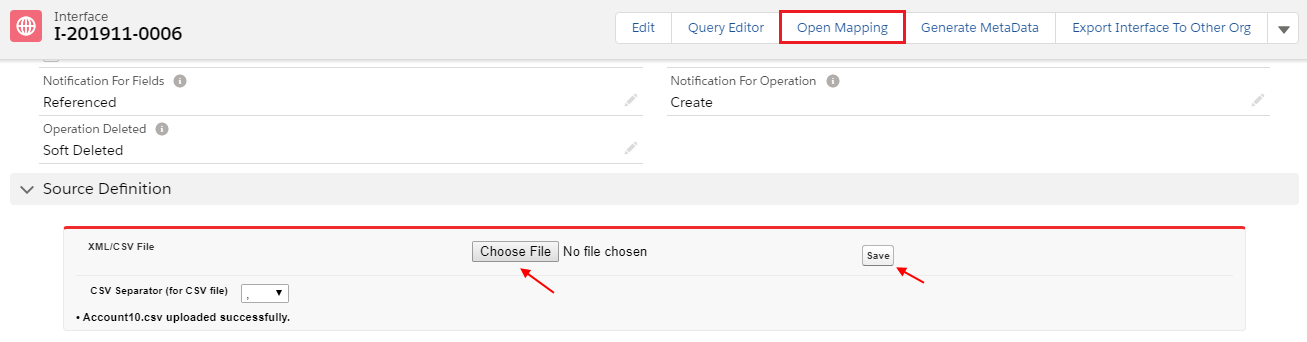

- Scroll down to the Source Definition section

- Choose a file and save

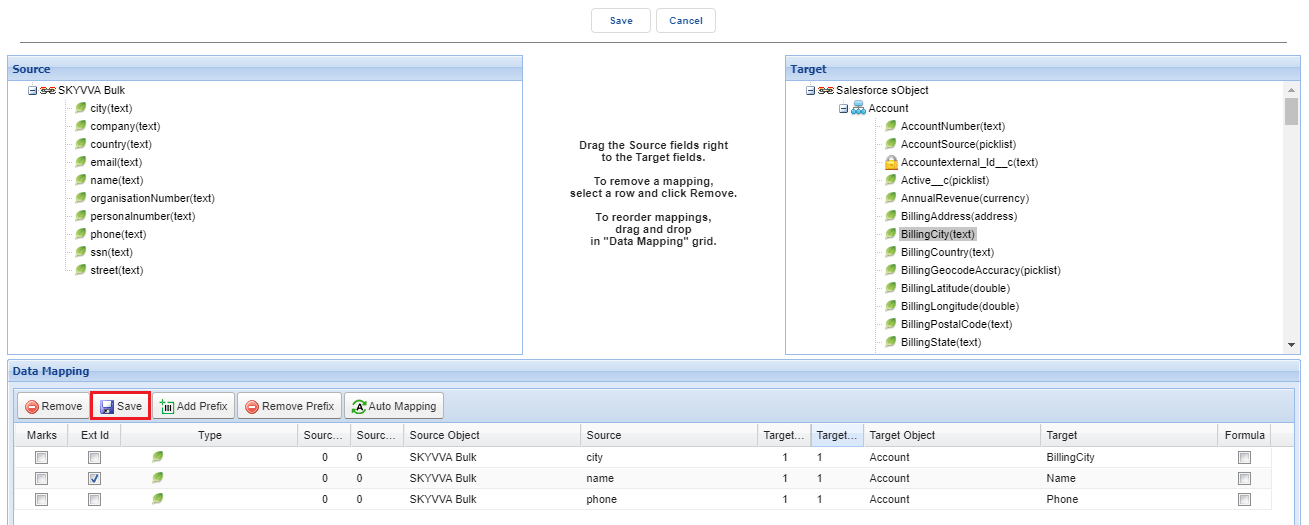

- Click the Open Mapping button

- Select the Ext Id field

- Save

Go to the SKYVVA Agent that is configured with your Salesforce Org.

- Select Integration Name

- Select SKYVVA Bulk Interface

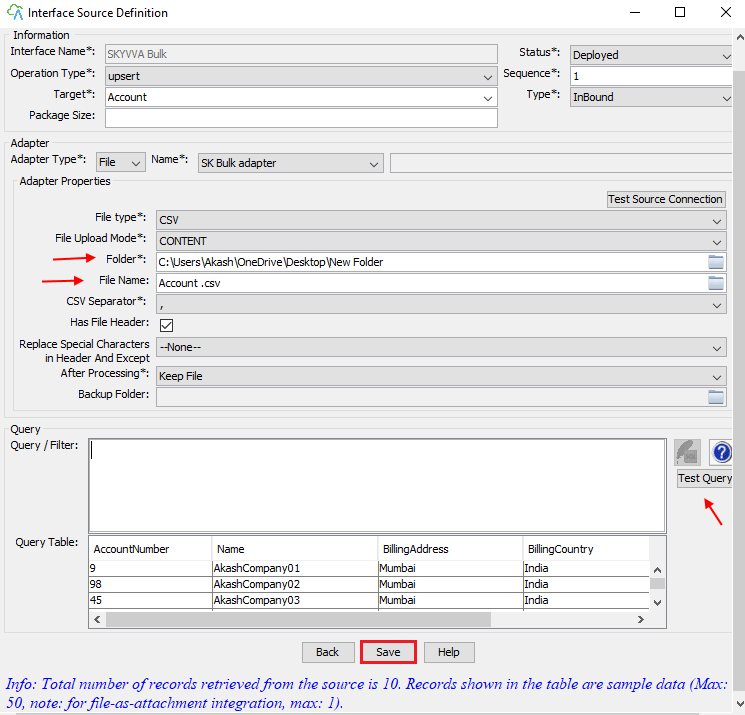

- Click Edit Interface

- Select the file folder

- Enter the file name

- Click Test Query

- Save

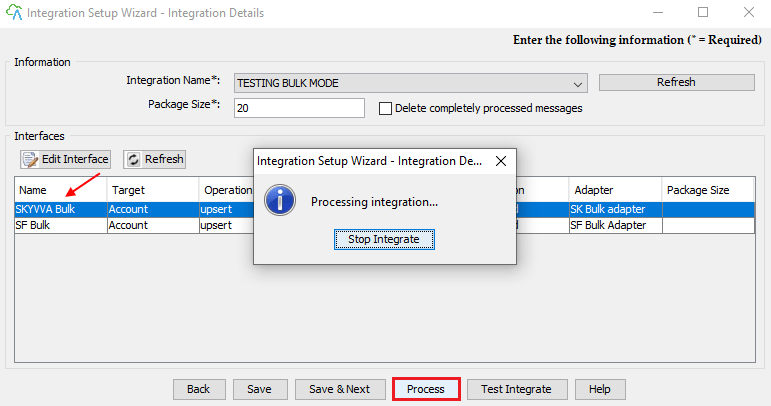

- Select your SKYVVA Bulk interface

- Click the Process button

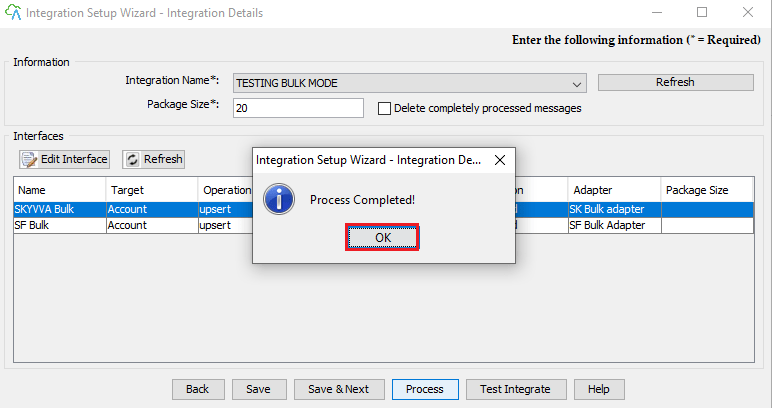

- Wait until the process completes

- Click the OK button

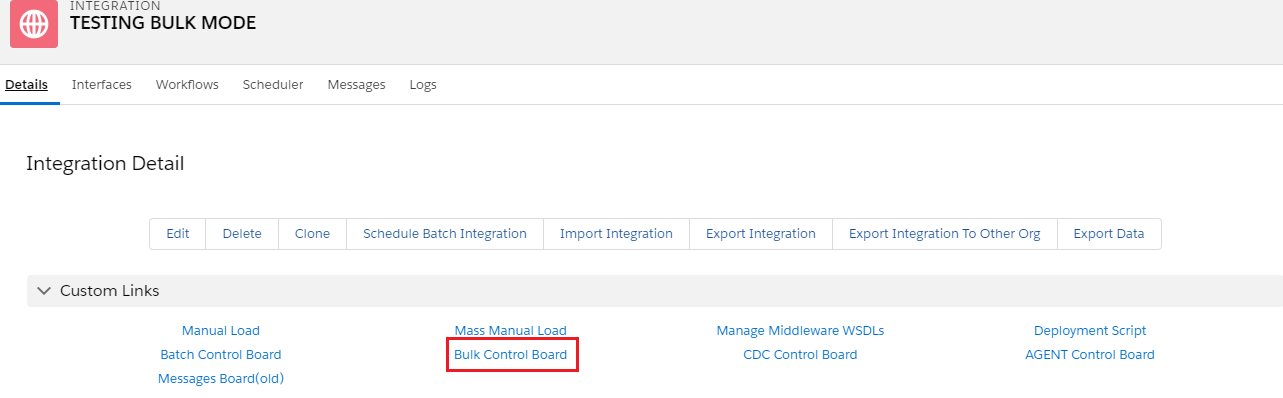

Go back to your Salesforce Org.

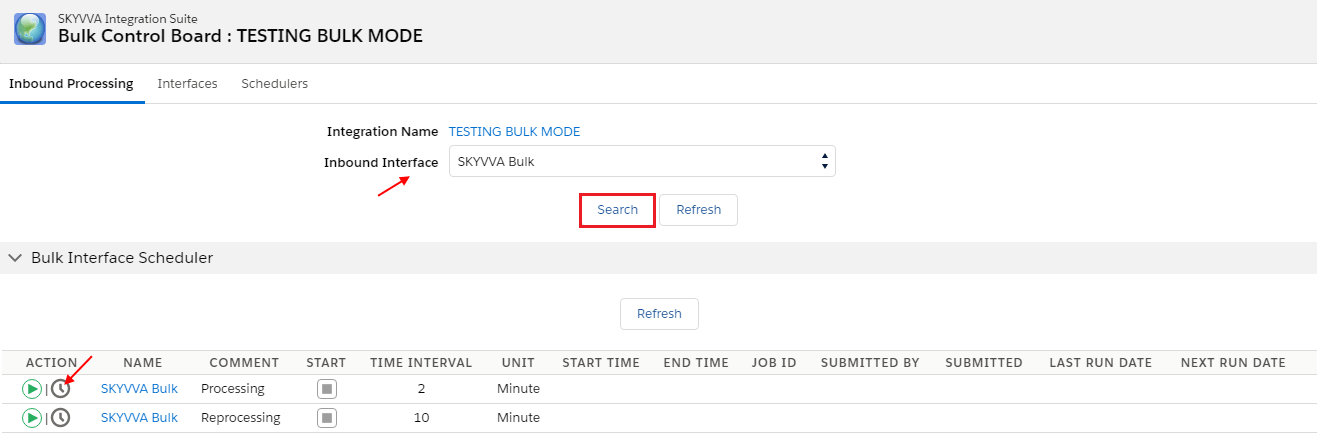

- Go to the Integration Detail page

- Click Bulk Control Board

- From the drop-down list, select your inbound interface

- Click the Search button

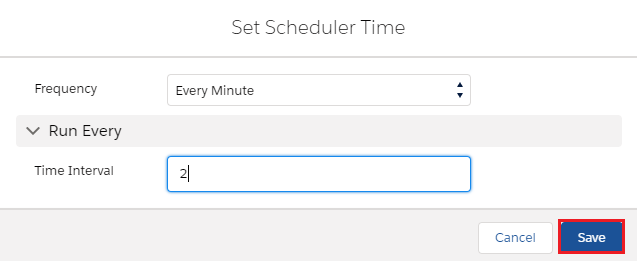

- Click the timer icon

- Set the time interval, for example 2 minutes

- Save

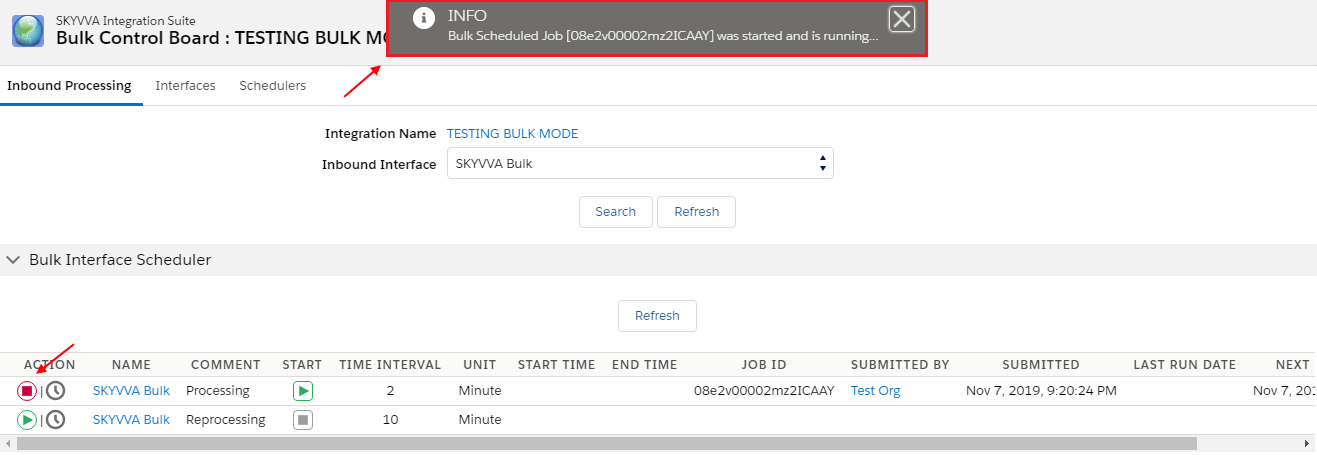

- Click the Action button and start the scheduler

- A dialog box shows the message "Bulk Schedule Job is running"

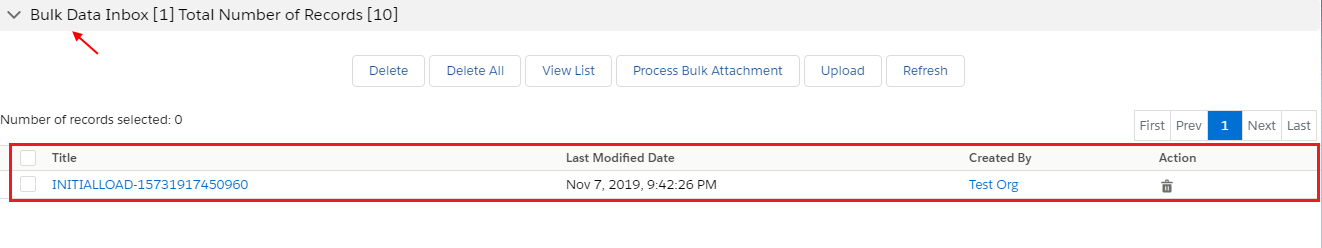

- Bulk Data Inbox shows the total number of records

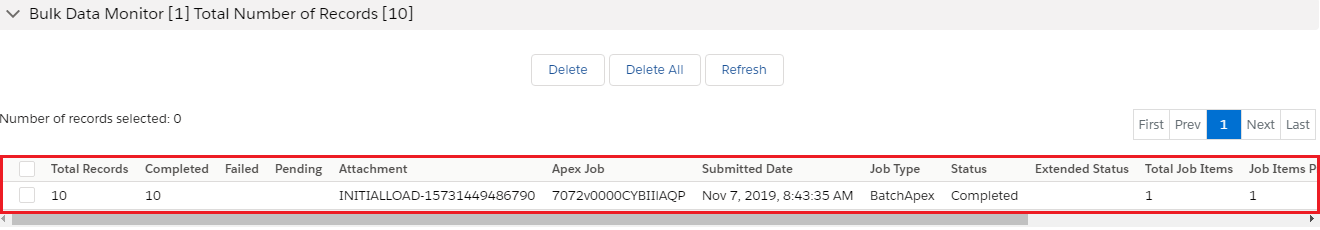

- Bulk Data Monitor shows the number of records (10 in this example)

- The status is Completed

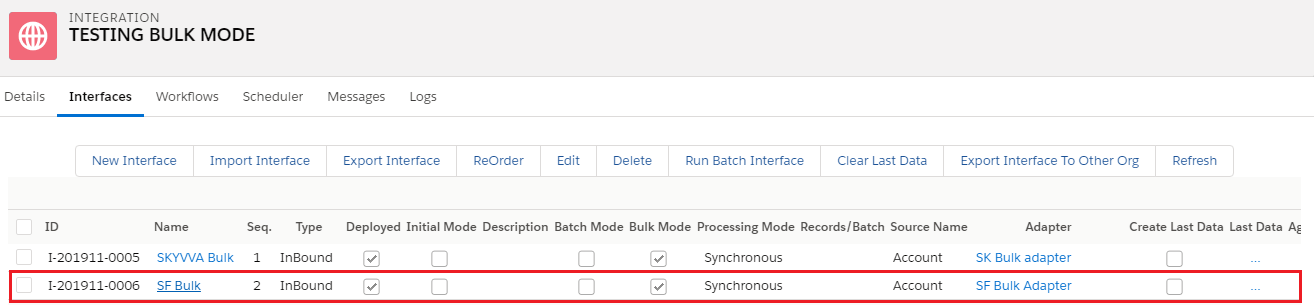

CASE 2: Using SFDC Bulk version

- Create an interface

- Open the interface

- Scroll down to the Bulk Setting section

- Select the BULK Mode checkbox

- Select SFDC Bulk API 1.0 or SFDC Bulk API 2.0 from the picklist

- Set Bulk Processing Mode to Parallel

- Save

- Scroll down to the Source Definition section

- Choose a file and save

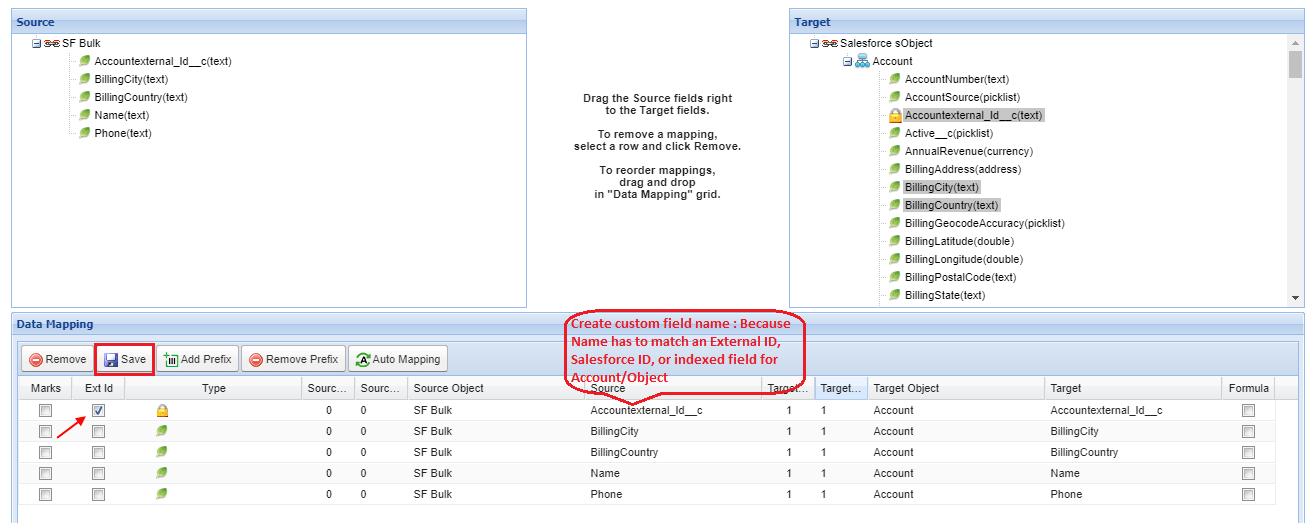

- Click the Open Mapping button

- Select the Custom Ext. Id field

- Save

Go to the SKYVVA Agent that is configured with your Salesforce Org.

- Select Integration Name

- Select SF Bulk Interface

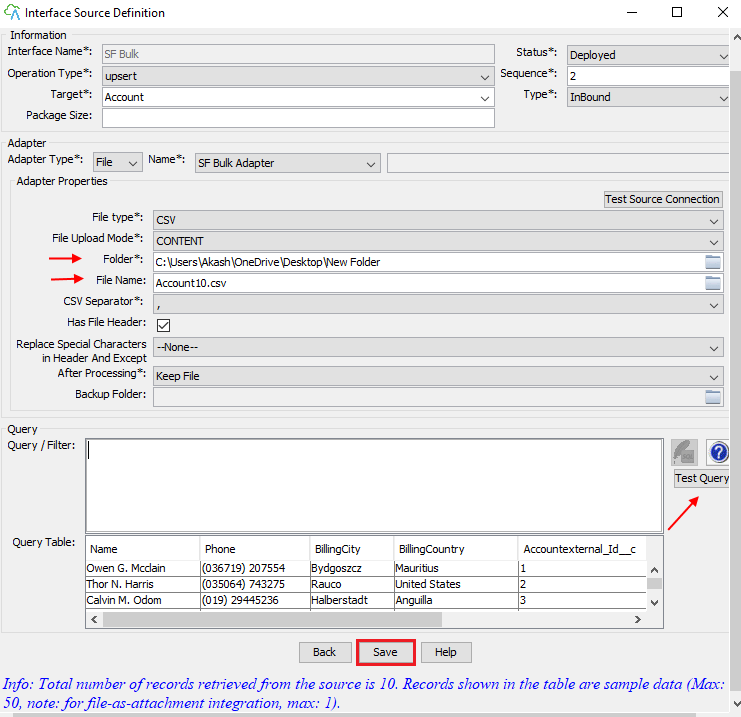

- Click Edit Interface

- Select the file folder

- Enter the file name

- Click Test Query

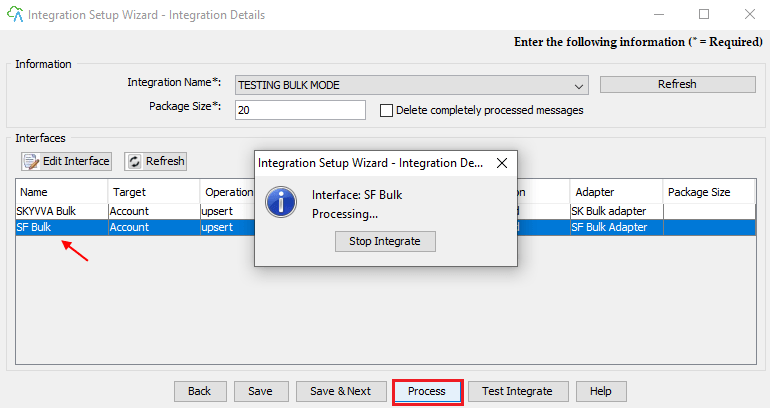

- Select the SF Bulk interface

- Click the Process button

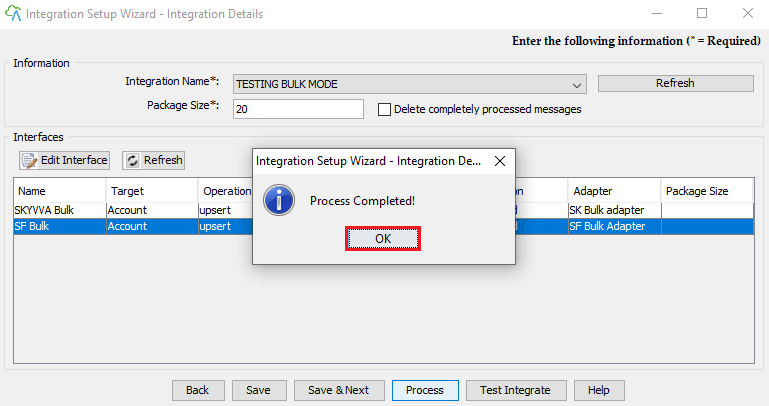

Go back to your Salesforce Org.

- Go to Setup

- Enter "Apex job" in the search box

- Find your job under Bulk Data Load Jobs

- Check that the job status shows it as completed
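If you work with the SFDC Bulk API 2.0 directly rather than through the Agent, the same completion check is typically done by polling the job until it reaches a terminal state. A minimal sketch, with `get_state` as a hypothetical callable standing in for the HTTP request that reads the job's state:

```python
import time

# Terminal states of a Salesforce Bulk API 2.0 ingest job.
TERMINAL_STATES = {"JobComplete", "Failed", "Aborted"}


def wait_for_job(get_state, poll_seconds=10.0, timeout_seconds=600.0):
    """Call get_state() until it returns a terminal state or the timeout expires.

    get_state is a hypothetical callable; in practice it would send
    GET .../jobs/ingest/<jobId>/ and return the "state" field of the response.
    """
    deadline = time.monotonic() + timeout_seconds
    while True:
        state = get_state()
        if state in TERMINAL_STATES:
            return state
        if time.monotonic() >= deadline:
            raise TimeoutError(f"job still {state!r} after {timeout_seconds} s")
        time.sleep(poll_seconds)


# Offline demo with a stubbed sequence of states.
states = iter(["UploadComplete", "InProgress", "JobComplete"])
print(wait_for_job(lambda: next(states), poll_seconds=0))  # JobComplete
```

A fixed polling interval keeps the sketch simple; for long-running jobs, a longer interval reduces the number of API calls spent on status checks.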