Event-based Technique #

- An event can come from an internal or an external source. It can originate from a user (a mouse click or keystroke), from an external source (a sensor output), or from the system itself (loading a program).

- Subscribers do not need to request the data again and again. Sending it to each recipient individually takes a lot of time; with the event-based technique, the publisher uploads the data once and all subscribers receive it.

- This is the modern way for different applications to communicate. It has two components: publish and subscribe. Netflix and YouTube are examples.

- Take Netflix as an example. It uses a queuing tool and a streaming tool. Netflix plays the role of the producer and the viewers play the role of the consumer: Netflix uploads a movie once, and every Netflix subscriber can watch it.

- An event is any significant occurrence or change in state for system hardware or software. An event is not the same as an event notification, which is a message or notification sent by the system to notify another part of the system that an event has taken place.
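The "upload once, every subscriber receives it" idea above can be sketched in a few lines. This is a minimal in-memory illustration, not how Netflix or any real broker is built; the `Broker` class, the `movies` topic, and the subscriber names are assumptions made for the example.

```python
from collections import defaultdict
from typing import Callable, DefaultDict, List

Handler = Callable[[str], None]


class Broker:
    """Tiny in-memory publish/subscribe hub (illustrative only)."""

    def __init__(self) -> None:
        # topic name -> list of subscriber callbacks
        self._subscribers: DefaultDict[str, List[Handler]] = defaultdict(list)

    def subscribe(self, topic: str, handler: Handler) -> None:
        self._subscribers[topic].append(handler)

    def publish(self, topic: str, message: str) -> int:
        """Deliver one message to every subscriber; return how many received it."""
        handlers = self._subscribers[topic]
        for handler in handlers:
            handler(message)
        return len(handlers)


broker = Broker()
inbox_alice: List[str] = []
inbox_bob: List[str] = []
broker.subscribe("movies", inbox_alice.append)
broker.subscribe("movies", inbox_bob.append)

# The publisher sends the message once; the broker fans it out.
delivered = broker.publish("movies", "new-release")
```

The publisher calls `publish` a single time and never addresses a subscriber directly, which is the property the bullets above describe.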

Benefits of event-driven architecture #

- An event-driven architecture can help organizations achieve a flexible system that can adapt to changes and make decisions in real-time. Real-time situational awareness means that business decisions, whether manual or automated, can be made using all of the available data that reflects the current state of your systems.

- Events are captured as they occur from event sources such as Internet of Things (IoT) devices, applications, and networks, allowing event producers and event consumers to share status and response information in real-time.

- Organizations can add event-driven architecture to their systems and applications to improve the scalability and responsiveness of applications and access to the data and context needed for better business decisions.

How does event-driven architecture work? #

SKYVVA Integration is a comprehensive set of integration and messaging technologies to connect applications and data across hybrid infrastructures. It is an agile, distributed, containerized, and API-centric solution. It provides service composition and orchestration, application connectivity and data transformation, real-time message streaming, change data capture, and API management—all combined with a cloud-native platform and toolchain to support the full spectrum of modern application development.

- The event-driven architecture is made up of event producers and event consumers. An event producer detects or senses an event and represents the event as a message. It does not know the consumer of the event or the outcome of an event.

- After an event has been detected, it is transmitted from the event producer to the event consumers through event channels, where an event processing platform processes the event asynchronously. Event consumers need to be informed when an event has occurred. They might process the event or may only be impacted by it.

- The event processing platform executes the correct response to an event and sends the activity downstream to the right consumers. This downstream activity is where the outcome of an event is seen.
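The flow described in this list (producer, event channel, processing platform, consumers) can be sketched with a queue and a worker thread. This is a simplified model under stated assumptions: the names `produce`, `processing_platform`, and the event types are invented for illustration, and a real platform would add routing rules, persistence, and error handling.

```python
import queue
import threading

channel = queue.Queue()   # event channel between producer and platform
consumers = []            # callbacks registered by event consumers
STOP = object()           # sentinel that shuts the worker down


def produce(event_type, payload):
    """Producer: represent the event as a message and put it on the channel.

    Returns immediately and knows nothing about who consumes the event.
    """
    channel.put({"type": event_type, "payload": payload})


def processing_platform():
    """Worker thread: take events off the channel and send them downstream."""
    while True:
        event = channel.get()
        if event is STOP:
            break
        for consumer in consumers:
            consumer(event)


received = []
consumers.append(received.append)   # one consumer that records what it sees

worker = threading.Thread(target=processing_platform)
worker.start()
produce("sensor.reading", "21.5")
produce("user.click", "button-7")
channel.put(STOP)
worker.join()
```

Because the producer only writes to the channel, consumers can be added or removed without changing producer code.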

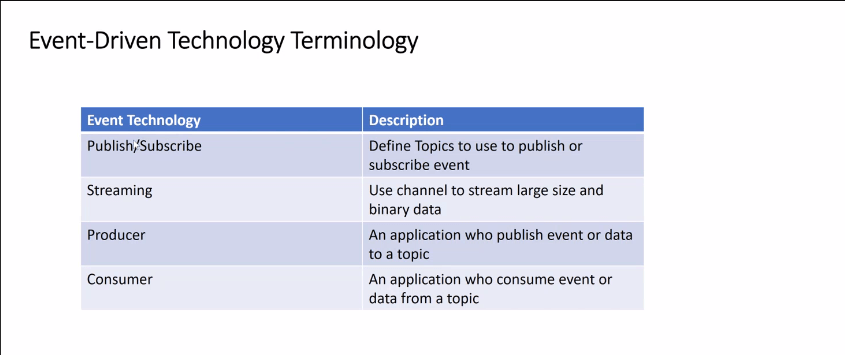

There are five main event terms:

- Publish

- Subscribe

- Streaming

- Producer

- Consumer

Check the picture below for a description.

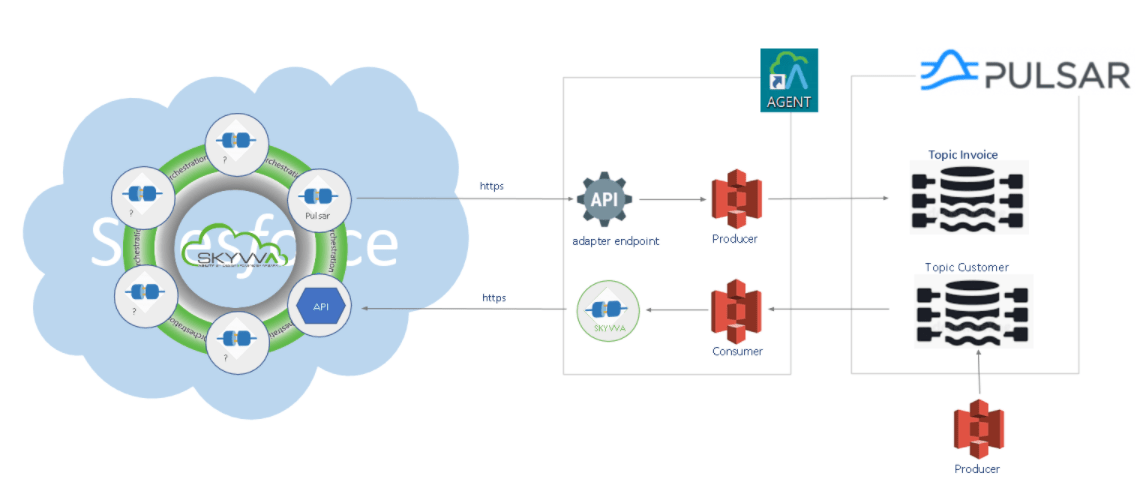

Pulsar Adapter #

We have two adapters that support processing using an event-based technique.

Pulsar uses event-based communication, which has two main components: publish (producer) and subscribe (consumer). The publisher publishes messages into a queue or topic hosted by a middleware tool such as Kafka or Pulsar. The middleware maintains the messages, follows the publish and subscribe principle, and delivers the messages to the consumers, so any application can subscribe. For example, Netflix plays the role of the publisher and we play the role of the subscriber (consumer).

- Inbound/Consumer Pulsar Adapter:

We use the Inbound Pulsar Adapter when somebody pushes a message into a topic, for example the topic customer, on the Pulsar side and we consume it. An event-driven listener, which is a Camel consumer, receives the message, so we do not need a scheduler and can consume immediately. This is the inbound process because we are receiving data.

- Outbound/Producer Pulsar Adapter:

We need the Outbound Pulsar Adapter when we want to send data out from Salesforce. We need to create a topic first. From Salesforce, we send data messages to the API endpoint of our Agent, and a Camel producer then publishes them into a topic, for example the topic invoice, on the Pulsar side. This is the outbound process because we are sending data.
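The two adapter roles can be illustrated with a small in-memory model. This is only a sketch of the direction of data flow: `InMemoryTopics`, `InboundAdapter`, `OutboundAdapter`, and `handle_request` are hypothetical names, not the SKYVVA Agent, Camel, or Pulsar API.

```python
from collections import defaultdict


class InMemoryTopics:
    """Stand-in for the message broker; not a real Pulsar client."""

    def __init__(self):
        self._listeners = defaultdict(list)   # topic name -> callbacks

    def listen(self, topic, callback):
        self._listeners[topic].append(callback)

    def send(self, topic, message):
        for callback in self._listeners[topic]:
            callback(message)


class InboundAdapter:
    """Consumer role: a listener fires as soon as a message arrives.

    No scheduler or polling loop is involved.
    """

    def __init__(self, topics, topic):
        self.received = []
        topics.listen(topic, self.received.append)


class OutboundAdapter:
    """Producer role: accept a payload at an endpoint and publish it to a topic."""

    def __init__(self, topics, topic):
        self._topics = topics
        self._topic = topic

    def handle_request(self, payload):
        # Hypothetical handler standing in for the Agent's API endpoint.
        self._topics.send(self._topic, payload)


topics = InMemoryTopics()
inbound = InboundAdapter(topics, "customer")
outbound = OutboundAdapter(topics, "invoice")

invoice_log = []                              # some downstream subscriber
topics.listen("invoice", invoice_log.append)

topics.send("customer", "customer-created")   # an external system publishes
outbound.handle_request("invoice-42")         # data leaving Salesforce
```

The inbound adapter only reacts to arriving messages, while the outbound adapter only pushes messages out, which matches the inbound and outbound definitions above.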

Check the picture given below for reference.

– How to use an Agent Pulsar adapter for consumers?

– How to use an Agent Pulsar adapter for Producer?

Kafka Adapter #

- Apache Kafka is a distributed data streaming platform that is a popular choice for event processing. It can handle publishing, subscribing to, storing, and processing event streams in real-time.

- Apache Kafka supports a range of use cases where high throughput and scalability are vital, and by minimizing the need for point-to-point integrations for data sharing in certain applications, it can reduce latency to milliseconds.
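Storing event streams means that records stay in an ordered log after delivery, and each group of consumers keeps track of its own read position (offset). The sketch below models that idea in memory; the `EventLog` class and the group names are assumptions for illustration and are not the Kafka client API.

```python
from typing import Dict, List


class EventLog:
    """Append-only log for one topic; each consumer group tracks its own offset."""

    def __init__(self) -> None:
        self._records: List[str] = []
        self._offsets: Dict[str, int] = {}   # group name -> next offset to read

    def append(self, record: str) -> int:
        """Store a record and return its offset (position in the log)."""
        self._records.append(record)
        return len(self._records) - 1

    def poll(self, group: str, max_records: int = 10) -> List[str]:
        """Return unread records for a group and advance that group's offset."""
        start = self._offsets.get(group, 0)
        batch = self._records[start:start + max_records]
        self._offsets[group] = start + len(batch)
        return batch


log = EventLog()
for name in ("order-1", "order-2", "order-3"):
    log.append(name)

billing_first = log.poll("billing", max_records=2)   # first two records
billing_second = log.poll("billing")                 # continues where it stopped
audit_all = log.poll("audit")                        # a new group starts at offset 0
```

Because reading does not remove records, a new consumer group can replay the whole stream without the producer sending anything again.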

Kafka uses event-based communication, which has two main components: publish (producer) and subscribe (consumer). The publisher uploads messages into a queue or topic hosted by a middleware tool such as Kafka or Pulsar. The middleware maintains the messages, follows the publish and subscribe principle, and delivers the messages to the consumers, so any application can subscribe. For example, Netflix plays the role of the publisher and we play the role of the subscriber (consumer).

We have two adapters that support processing using an event-based technique.

- Inbound Kafka Adapter:

We use the Inbound Kafka Adapter when somebody pushes a message into a topic, for example the topic customer, on the Kafka side and we consume it. An event-driven listener, which is a Camel consumer, receives the message, so we do not need a scheduler and can consume immediately. This is the inbound process because we are receiving data. The adapter plays the role of the consumer.

- Outbound Kafka Adapter:

We need the Outbound Kafka Adapter when we want to send data out from Salesforce. We need to create a topic first. From Salesforce, we send data messages to the API endpoint of our Agent, and a Camel producer then publishes them into a topic, for example the topic invoice, on the Kafka side. This is the outbound process because we are sending data out. The adapter plays the role of the producer.

Check the picture given below for reference.

– How to use an Agent Kafka adapter for consumers?

– How to use an Agent Kafka adapter for Producer?